Create an XCom for each training_model task.The objective is to create one XCom for each model and fetch the XComs back in the task choose_model to select the best model. Finally, we pick the best model based on the generated accuracies in choose_model. That function randomly generates an accuracy for each model A, B, and C. Each task uses the PythonOperator to execute the function _training_model. Then we dynamically create three tasks, training_model_ with a list comprehension. downloading_data uses the BashOperator to execute a bash command that waits for three seconds. Start_date=datetime(2023, 1, = BashOperator(ĭownloading_data > training_model_task > choose_modelĬreate a file xcom_dag.py and put the code in it. Here is the data pipeline we will use: from airflow import DAGįrom import BashOperatorįrom import PythonOperator Great! Now you know what an XCom is, let’s create your first Airflow XCom! How to use XCom in Airflow? To access XComs, go to the user interface, then Admin and XComs.

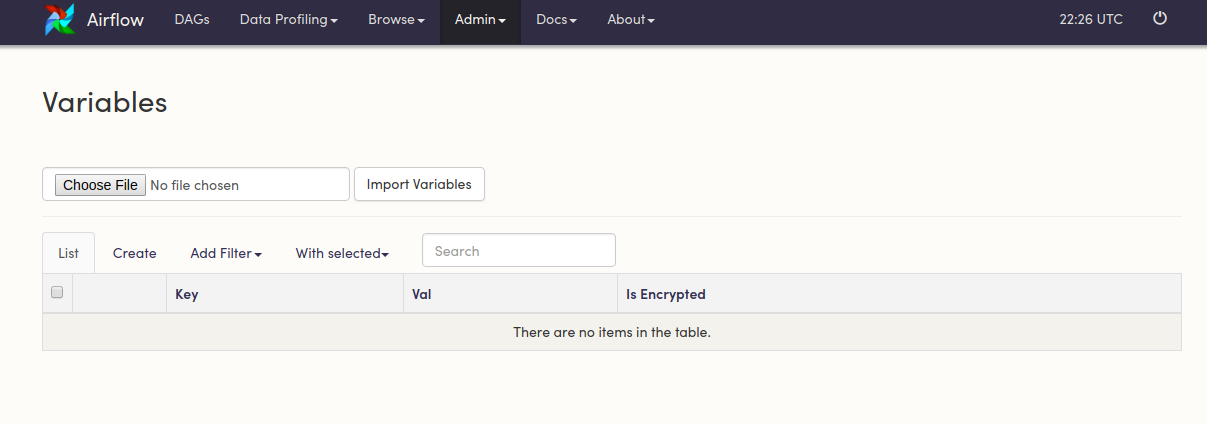

That has some implications that you will see later in this tutorial. Keep in mind that Airflow stores XComs in the database. A dag id: The identifier of the DAG that creates the XCom.A task id: The identifier of the task that creates the XCom.You don’t know what I’m talking about? Check my video about how scheduling works in Airflow. That’s how Airflow avoids fetching an XCom coming from another DAG run. An execution date/Logical date: That date corresponds to the execution date/logical date of the DAG run having generated the XCom.A timestamp: When the XCom was created.If you want to learn more about the differences between JSON/Pickle, click here. Serializing with pickle has been disabled by default to avoid RCE exploits/security issues. That value must be serializable in JSON or pickable. A value: This is what you want to share.A key: This is the identifier of an XCom.You can think of an XCom as a little object with the following fields: XCom stands for “cross-communication” and allows data exchange between tasks. That’s perfectly viable, but is there any native and easier mechanism in Airflow allowing you to do that? One solution could be to store the accuracies in a database and fetch them back in the task choosing_model with an SQL request. How can we get the accuracy of each model in the task choosing_model to pick the best one? In a nutshell, this data pipeline trains different machine learning models based on a dataset and the last task selects the model with the highest accuracy. Let’s imagine you have the following data pipeline: The Airflow XCom concept is not easy, so let me illustrate why it can be helpful for you. As usual, starting with a use case is always good to better explain why you need functionality.
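To make the exchange between the training tasks and choose_model concrete, here is a minimal sketch in plain Python that runs without Airflow. `FakeTaskInstance` is a hypothetical in-memory stand-in written for this illustration, not an Airflow class; it only mimics the `xcom_push` and `xcom_pull` calls that an Airflow task instance offers, in a simplified form. The key name `model_accuracy`, the accuracy range, the task ids `training_model_A` to `training_model_C`, and the helper `_choose_model` are also assumptions made for the example.

```python
import random


class FakeTaskInstance:
    """Hypothetical stand-in for an Airflow task instance (illustration only)."""

    def __init__(self, store, task_id):
        self.store = store      # shared dict playing the role of the metadata database
        self.task_id = task_id  # id of the task this instance belongs to

    def xcom_push(self, key, value):
        # Store the value under (task id, key), a simplification of the XCom fields.
        self.store[(self.task_id, key)] = value

    def xcom_pull(self, key, task_ids):
        # Return one value per requested task id, in the order given.
        return [self.store[(task_id, key)] for task_id in task_ids]


def _training_model(ti):
    # Randomly generate an accuracy (the range is an assumption) and share it.
    accuracy = random.uniform(0.1, 10.0)
    ti.xcom_push(key="model_accuracy", value=accuracy)


def _choose_model(ti):
    # Fetch the three accuracies back and keep the highest one.
    task_ids = [f"training_model_{model}" for model in ["A", "B", "C"]]
    accuracies = ti.xcom_pull(key="model_accuracy", task_ids=task_ids)
    best_accuracy, best_task = max(zip(accuracies, task_ids))
    return best_task, best_accuracy


# Simulate one DAG run: three training tasks, then the selection task.
store = {}
for model in ["A", "B", "C"]:
    _training_model(FakeTaskInstance(store, f"training_model_{model}"))

best_task, best_accuracy = _choose_model(FakeTaskInstance(store, "choose_model"))
print(f"Best model task: {best_task} (accuracy {best_accuracy:.2f})")
```

In a real DAG, you would not build the task instance yourself: Airflow hands it to the Python callable, and the pushed values end up in the metadata database instead of a dict.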
